Overview of DiffSketch

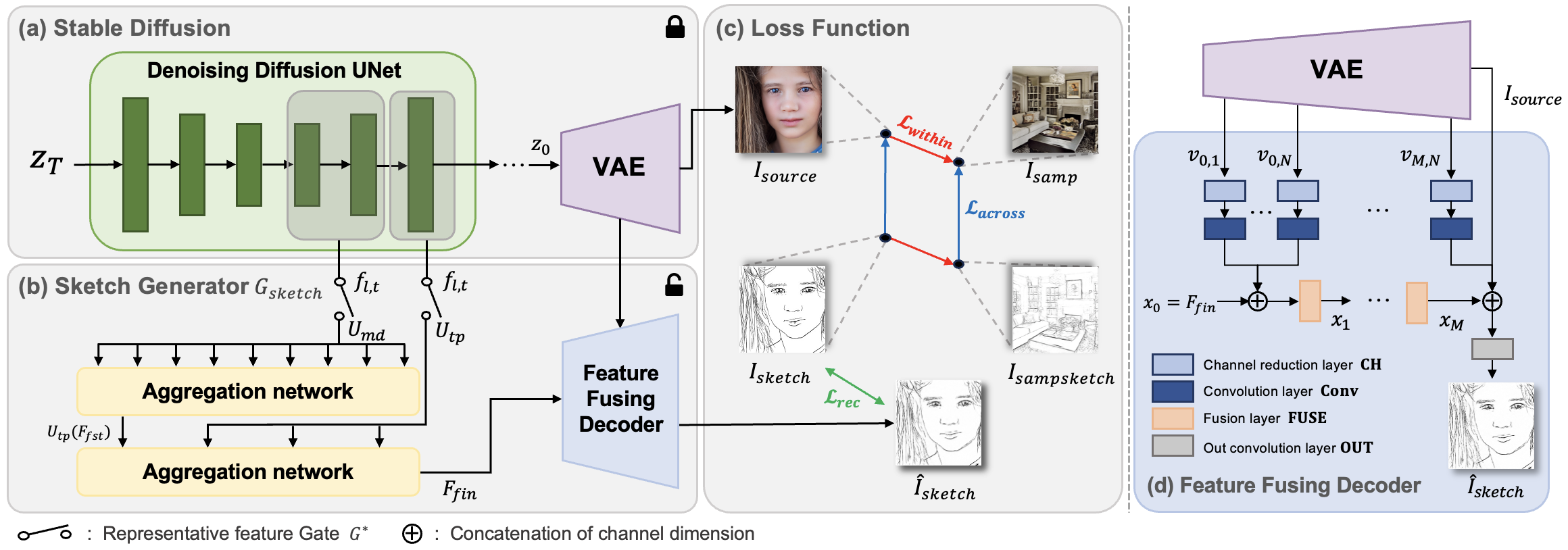

UNet features generated during the denoising process are fed to the aggregation networks, where they are fused with VAE features to generate a sketch corresponding to the image that Stable Diffusion generates.

We introduce DiffSketch, a method for generating a variety of stylized sketches from images. Our approach focuses on selecting representative features from the rich semantics of deep features within a pretrained diffusion model. This novel sketch generation method can be trained with one manual drawing. Furthermore, efficient sketch extraction is ensured by distilling a trained generator into a streamlined extractor. We select denoising diffusion features through analysis and integrate these selected features with VAE features to produce sketches. Additionally, we propose a sampling scheme for training models using a conditional generative approach. Through a series of comparisons, we verify that distilled DiffSketch not only outperforms existing state-of-the-art sketch extraction methods but also surpasses diffusion-based stylization methods in the task of extracting sketches.
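
To make the idea of integrating selected denoising features with VAE features more concrete, here is a minimal NumPy sketch. It is not the DiffSketch aggregation network. The function names (`upsample_nearest`, `fuse_features`), the tensor shapes, nearest-neighbour resizing, and plain channel concatenation are all assumptions chosen for illustration, and random arrays stand in for real UNet and VAE features.

```python
import numpy as np


def upsample_nearest(feat, size):
    """Nearest-neighbour upsample a (C, H, W) map to (C, size, size).

    Assumes `size` is an integer multiple of both H and W.
    """
    _, h, w = feat.shape
    return feat.repeat(size // h, axis=1).repeat(size // w, axis=2)


def fuse_features(unet_feats, vae_feat):
    """Resize every UNet map to the VAE resolution and stack along channels.

    Hypothetical stand-in for a learned aggregation step: a real network
    would apply learned layers instead of a plain concatenation.
    """
    size = vae_feat.shape[-1]
    resized = [upsample_nearest(f, size) for f in unet_feats]
    return np.concatenate(resized + [vae_feat], axis=0)


# Placeholder features (random values, illustrative shapes only).
rng = np.random.default_rng(0)
unet_feats = [rng.normal(size=(8, 16, 16)), rng.normal(size=(4, 32, 32))]
vae_feat = rng.normal(size=(4, 64, 64))

fused = fuse_features(unet_feats, vae_feat)
print(fused.shape)  # (16, 64, 64)
```

The fused tensor keeps the VAE features unchanged in its last channels, so a downstream sketch decoder (not shown) would see both the semantic UNet features and the high-frequency VAE features at the same spatial resolution.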

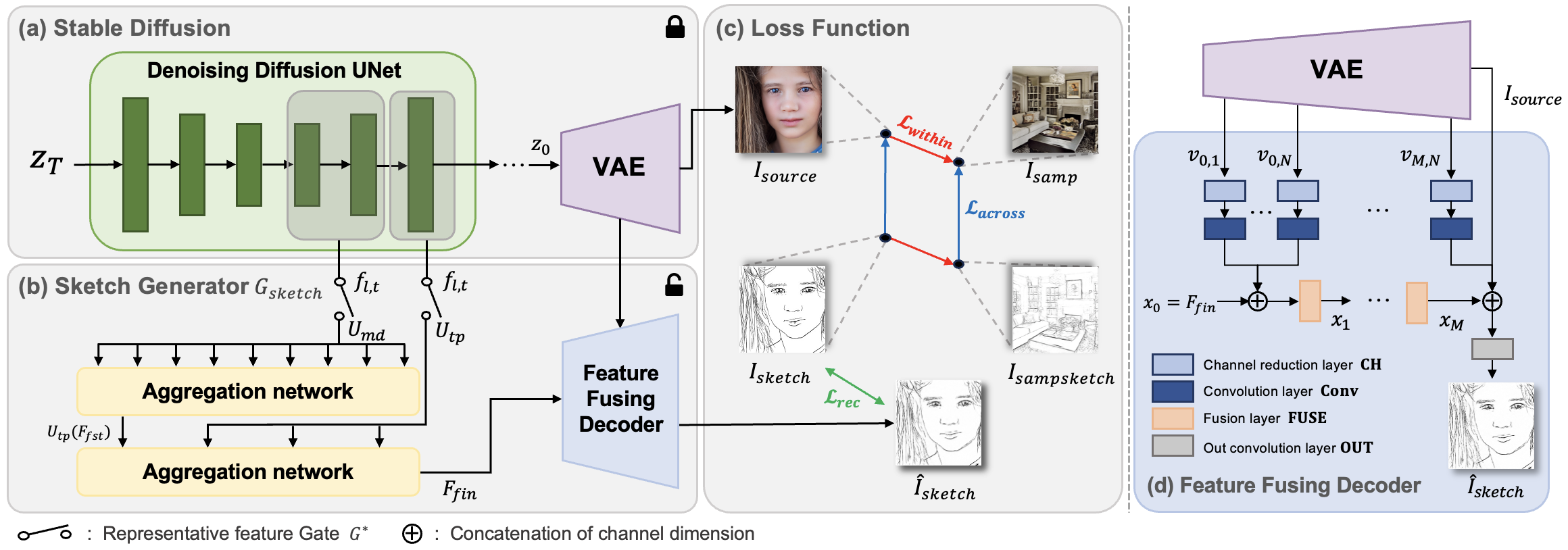

PCA is applied to DDIM-sampled features from different classes. (a) Features colored by human-labeled class. (b) Features colored by denoising timestep.

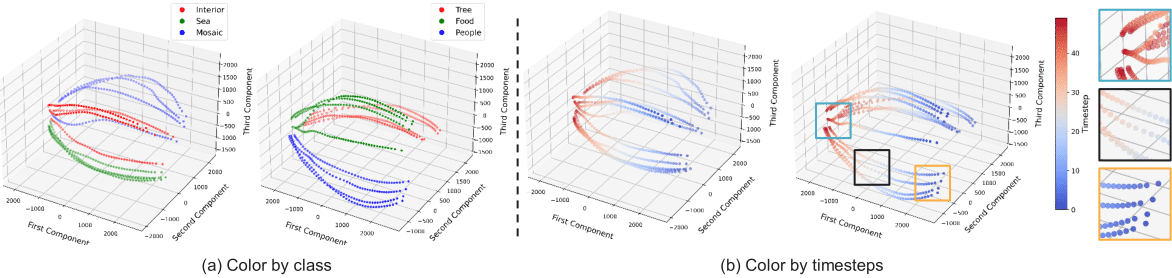

Top: features colored by human-labeled class. Bottom: features colored by denoising timestep.
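
The kind of analysis shown in the PCA figures above can be sketched in a few lines of NumPy. This is an illustrative sketch under stated assumptions, not the authors' analysis code: `pca_project` is a hypothetical helper, the features are synthetic (random vectors shifted by class and timestep), and the dimensions are arbitrary. With real data, each row would be a feature vector collected at one DDIM step.

```python
import numpy as np


def pca_project(features, n_components=2):
    """Project (N, D) feature vectors onto their top principal components."""
    centered = features - features.mean(axis=0, keepdims=True)
    # Rows of vt are the principal directions, ordered by explained variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:n_components].T


# Synthetic stand-in for features sampled at several timesteps per class.
rng = np.random.default_rng(0)
n_classes, n_timesteps, dim = 3, 5, 32
class_ids = np.repeat(np.arange(n_classes), n_timesteps)
timesteps = np.tile(np.arange(n_timesteps), n_classes)
features = (
    rng.normal(size=(n_classes * n_timesteps, dim))
    + class_ids[:, None]          # assumed class-dependent offset
    + 2.0 * timesteps[:, None]    # assumed timestep-dependent offset
)

projected = pca_project(features)
print(projected.shape)  # (15, 2)
```

Plotting `projected` twice, once colored by `class_ids` and once by `timesteps`, gives the two views described in the captions and shows whether the features cluster by semantic class or by denoising timestep.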

@article{aaa,
  title={DiffSketch: Representative Feature Extraction During Diffusion Process for Sketch Extraction with One Example},
  author={Anonymous},
  year={2024}
}